This post was written by Matthew Skelton, co-author of Team Guide to Software Operability.

Deployability is now a first-class concern for databases, and there are several technical choices (conscious and accidental) which band together to block the deployability of databases. Can we improve database deployability and enable true Continuous Delivery for our software systems? Of course we can, but first we have to see the problems.

Until recently, the way in which software components (including databases) were deployed was not a primary consideration for most teams, but the rise of automated, programmable infrastructure, and powerful practices such as Continuous Delivery, has changed that. The ability to deploy rapidly, reliably, regularly, and repeatedly is now crucial for every aspect of our software.

This is part 3 of a 7-part series on removing blockers to Database Lifecycle Management. The series was initially published as an article on Simple Talk and appears here with permission:

- Minimize changes in Production

- Reduce accidental complexity

- Archive, distinguish, and split data

- Name things transparently

- Source Business Intelligence from a data warehouse

- Value more highly the need for change

- Avoid Production-only tooling and config where possible

The original article is Common database deployment blockers and Continuous Delivery headaches.

Lack of data warehousing and archiving

Sheer data size can also work against deployability. Large backups take a long time to run, transmit, and restore, preventing effective use of the database in upstream testing. Moving from eight hours for a backup/transmit/restore cycle to even 30 minutes makes a huge difference in a Continuous Delivery context, particularly when you include a few retries in the process. In some of the post-Dotcom systems I mentioned above, around 70-80% of the data held in the core transactional database was historical data that was rarely requested or used, but was preventing the rapid export/restore of data that would have led to effective testing.

Remedy: Archive, distinguish, and split data

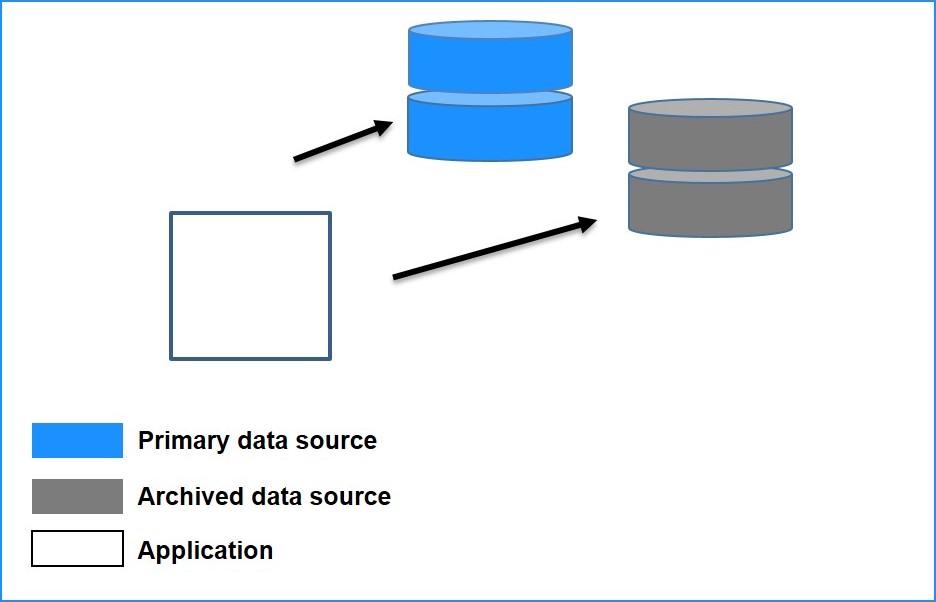

This is one of the issues which is, at least at first glance, more pertinent to databases than many we’ve discussed (and certainly more painful in many cases). Applications (and developers) need to be more ‘patient’ when working with data; we should not expect to have all data readily to hand, but instead appreciate that data should, in fact, be archived. Working in a more asynchronous, request-and-callback fashion has the benefit of allowing smaller ‘live’ databases, making restoring and testing on real data easier and quicker. By archiving rarely-requested data, we can drastically reduce the size of our ‘data space’ to something that more accurately reflects user demand. Of course, applications then need to be updated to understand that ‘live’ data is held only for (say) 9 or 12 months (depending on business need), and so to treat ‘live’ and historical data as two discrete sets.
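To make the archiving step concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The table names (`orders`, `orders_archive`), the `placed_on` column, and the 365-day window are all assumptions chosen for illustration; a real archive would more likely live in a separate database or storage tier.

```python
import sqlite3
from datetime import date, timedelta


def archive_old_rows(conn, today, keep_days=365):
    """Move orders older than the retention window into orders_archive.

    Returns the number of rows moved. Table and column names are hypothetical.
    """
    # ISO-8601 date strings compare correctly as plain text.
    cutoff = (today - timedelta(days=keep_days)).isoformat()
    with conn:  # one transaction: copy and delete together, or neither
        conn.execute(
            "INSERT INTO orders_archive SELECT * FROM orders WHERE placed_on < ?",
            (cutoff,),
        )
        moved = conn.execute(
            "DELETE FROM orders WHERE placed_on < ?", (cutoff,)
        ).rowcount
    return moved


def demo():
    """Build a tiny in-memory database and archive everything over a year old."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, placed_on TEXT)")
    conn.execute("CREATE TABLE orders_archive (id INTEGER PRIMARY KEY, placed_on TEXT)")
    conn.executemany(
        "INSERT INTO orders (id, placed_on) VALUES (?, ?)",
        [(1, "2013-01-15"), (2, "2014-06-01"), (3, "2015-02-20")],
    )
    moved = archive_old_rows(conn, today=date(2015, 3, 1), keep_days=365)
    live = conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
    archived = conn.execute("SELECT COUNT(*) FROM orders_archive").fetchone()[0]
    return moved, live, archived
```

Running the copy and the delete inside one transaction means a failure part-way through leaves the live table untouched, which matters if a job like this runs unattended on a schedule.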

In practice this might mean performing an asynchronous, lazy load for historical data (archived to a secondary or tertiary location and rehydrated for the user when requested), but a synchronous, greedy load for ‘live’ data. This live vs. historical approach also matches human expectations, where ‘retrieving from an archive’ is naturally understood to take longer, so your users shouldn’t be up in arms.
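One way this split could look in application code is sketched below with Python's `asyncio`. The two dictionaries stand in for the live database and the archive, and the function names and the short sleep are hypothetical placeholders rather than any particular product's API.

```python
import asyncio
from datetime import date

# Stand-ins for real stores; both are hypothetical in-memory dictionaries.
LIVE_STORE = {"order-3": {"id": "order-3", "placed_on": date(2015, 2, 20)}}
ARCHIVE_STORE = {"order-1": {"id": "order-1", "placed_on": date(2013, 1, 15)}}


def load_live(order_id):
    """Synchronous, greedy read from the small live store."""
    return LIVE_STORE.get(order_id)


async def rehydrate_from_archive(order_id):
    """Asynchronous read that simulates slow archive retrieval."""
    await asyncio.sleep(0.01)  # placeholder for the archive round trip
    return ARCHIVE_STORE.get(order_id)


async def get_order(order_id):
    """Try the live store first; fall back to the archive only when needed."""
    record = load_live(order_id)
    if record is not None:
        return record, "live"
    record = await rehydrate_from_archive(order_id)
    source = "archive" if record is not None else "missing"
    return record, source


def demo():
    """Look up one live, one archived, and one unknown order."""
    async def run():
        return [await get_order(k) for k in ("order-3", "order-1", "order-9")]

    return [source for _, source in asyncio.run(run())]
```

Returning the source alongside the record lets the user interface show something like "retrieving from archive" while the slower path completes, which fits the expectation that archived data takes longer to fetch.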

Of course, we still need to distinguish between data that correctly belongs in a large central database, and data that has been added out of laziness or mere convenience, and store them separately. Once we’ve managed to split data along sensible partitions, we can also begin to practice polyglot persistence and use databases to their strengths: a relational database where the data is relational, a graph database where the data is highly connected, a document database where the data is more document-like, and so on.

Fortunately, there are tried and tested patterns for building software systems that do not have single central data stores: Event Sourcing, CQRS, and message-queue based publish/subscribe asynchronous designs work well and are highly scalable. The ‘microservices’ approach is another way to improve deployability, and is deep enough to warrant its own article later on.
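As a rough illustration of how these patterns fit together, the sketch below combines an append-only event log, a separate read model, and an in-process publish/subscribe bus. Every class and event name here is hypothetical, and the bus is a stand-in for a real message queue; it shows the shape of the idea rather than a production design.

```python
from collections import defaultdict


class EventBus:
    """In-process publish/subscribe; a real system would use a message queue."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, event_type, handler):
        self._subscribers[event_type].append(handler)

    def publish(self, event_type, payload):
        for handler in self._subscribers[event_type]:
            handler(payload)


class OrderWriteModel:
    """Command side: appends events to a log instead of updating rows in place."""

    def __init__(self, bus):
        self.log = []
        self._bus = bus

    def place_order(self, customer, amount):
        event = {"customer": customer, "amount": amount}
        self.log.append(("OrderPlaced", event))
        self._bus.publish("OrderPlaced", event)


class SpendReadModel:
    """Query side: a projection kept up to date by subscribing to events."""

    def __init__(self, bus):
        self.total_by_customer = defaultdict(int)
        bus.subscribe("OrderPlaced", self._on_order_placed)

    def _on_order_placed(self, event):
        self.total_by_customer[event["customer"]] += event["amount"]


def demo():
    """Place three orders and read totals back from the projection."""
    bus = EventBus()
    reads = SpendReadModel(bus)
    writes = OrderWriteModel(bus)
    writes.place_order("alice", 30)
    writes.place_order("bob", 5)
    writes.place_order("alice", 12)
    return dict(reads.total_by_customer), len(writes.log)
```

Because the read model is rebuilt purely from events, it can live in its own small data store and be deployed, restored, or replaced independently of the write side.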

These approaches are not easy, but the requirement for rapid and reliable changes (for which Continuous Delivery is a good starting point) already makes the problem domain trickier, so we should expect the required skill level to rise as well.

Read more about Database Lifecycle Management in this eBook co-authored by Grant Fritchey and Matthew Skelton.
