This post was written by Rich Bosomworth.


This is the third post in an ongoing series exploring Rancher Server deployment, configuration and extended use. In the last post I detailed how to implement single node resilience in AWS for Rancher Server. In this post, we will add an extra level of HA by configuring an AWS EFS volume for shared storage and mounting it using the Rancher-NFS service. By using EFS to maintain data consistency, the storage component is preserved outside of the Rancher environment, whilst mounting it using Rancher-NFS delivers zone resilience. Content is aimed at those familiar with AWS concepts and terminology and is best viewed from a desktop or laptop.

Series Links

Container Clustering with Rancher Server (Part 2) – Single Node Resilience in AWS

Container Clustering with Rancher Server (Part 3) – AWS EFS mounts using Rancher-NFS

Build Architecture

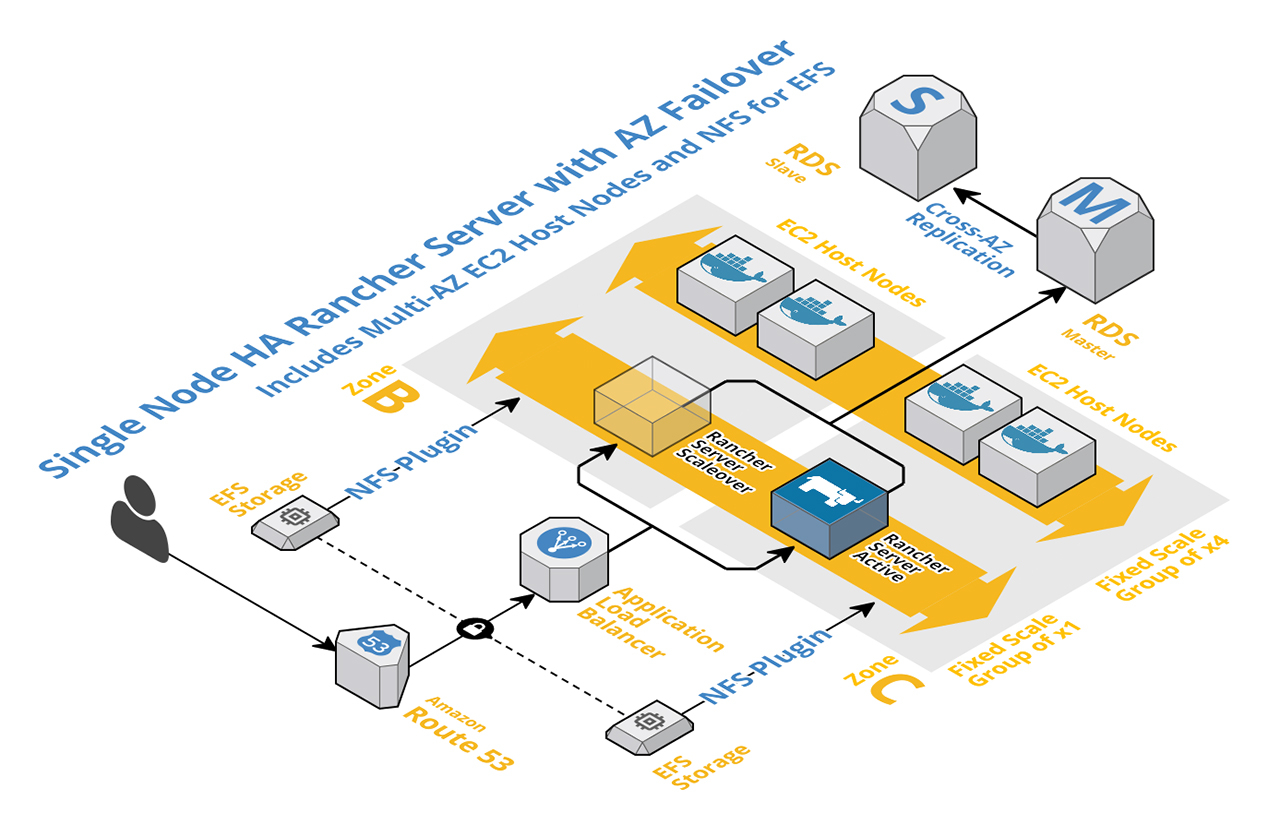

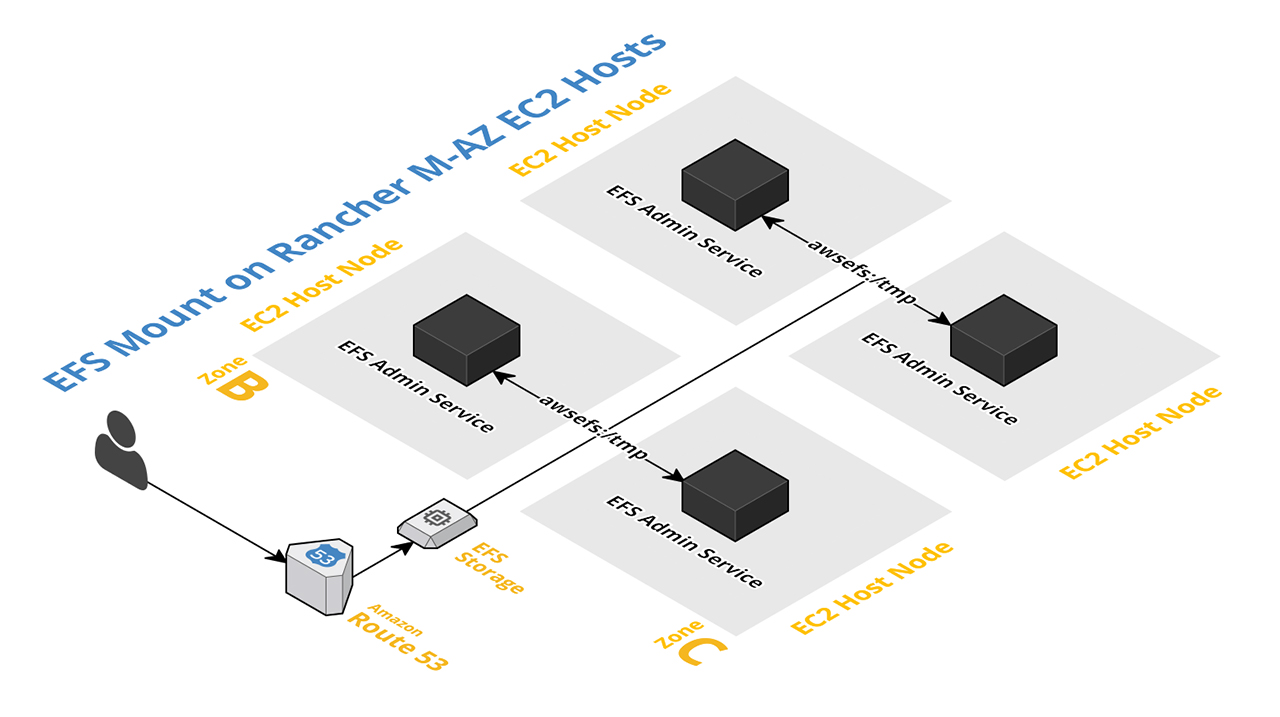

Fig:1 represents the top-level build architecture referenced in this post. It is an extension of the architecture used in Part 2 and includes an additional auto-scaling group for the EC2 Host Nodes, as well as the Rancher Plugin for NFS.

Fig:1

Build advisory

This post is not intended to detail VPC, subnet and security group best practices. The AWS resources used were configured at a basic level, solely to demonstrate installation and functionality of the Rancher-NFS service, and have since been destroyed. To run Rancher-NFS for EFS in a production environment, a more detailed and secure level of VPC, subnet and security group configuration is recommended, with all resources inside the same VPC.

Create the EFS volume

From within the AWS Console access the EFS service.

*NOTE* – At the time of writing, AWS EFS is only supported in the following regions: US-East (N. Virginia), US-East (Ohio), US-West (Oregon) and EU-West (Ireland).

Stage one – Configure file system access (Fig:2). In the example we have selected x2 public subnets and the default security group.

Fig:2

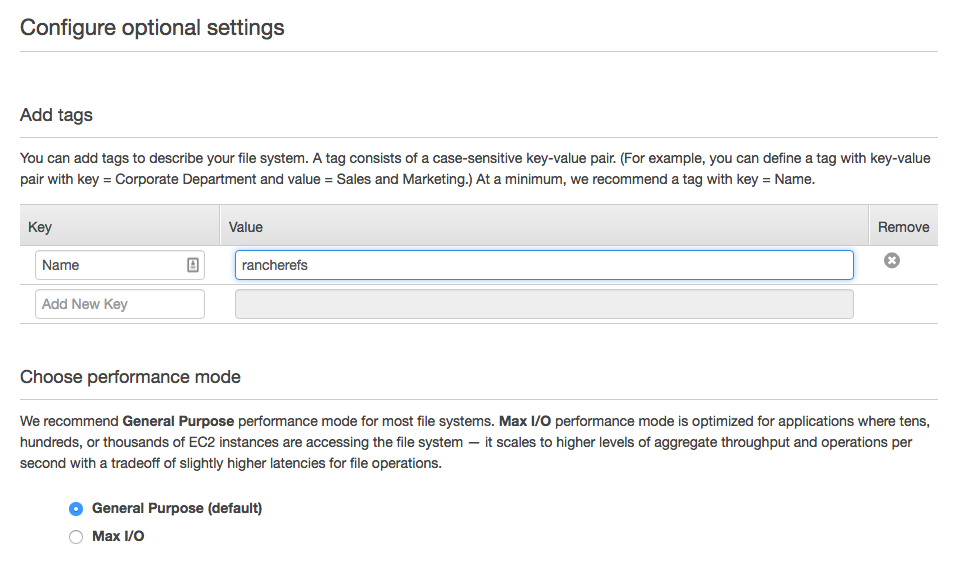

Stage two – Configure optional settings (Fig:3)

Fig:3

Stage three – Review and create (Fig:4)

Fig:4

While the EFS volume is being created, add an entry for port 2049 (NFS) to the EC2 security group used by the EFS volume (Fig:5). For our demonstration we created the EFS volume in a different VPC to our Rancher build and used the default security group with an open entry for access. In a working environment all resources should be placed within the same VPC, with an EFS-specific security group locked down to the Rancher server and host groups.

Fig:5
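As a quick check that the port 2049 rule is in effect, a host can attempt a TCP connection to the EFS endpoint. The following is a minimal Python sketch and was not part of the original build; the function name `nfs_port_open` and the timeout value are our own choices for illustration.

```python
import socket

NFS_PORT = 2049  # the NFS port opened in the security group


def nfs_port_open(host: str, port: int = NFS_PORT, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        # create_connection resolves the name and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and DNS resolution failures.
        return False
```

Run from one of the Rancher hosts, `nfs_port_open("<your EFS DNS name>")` should return `True` once the rule is in place. A `False` result only tells you the connection failed; the cause could be the security group, DNS resolution or routing between VPCs.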

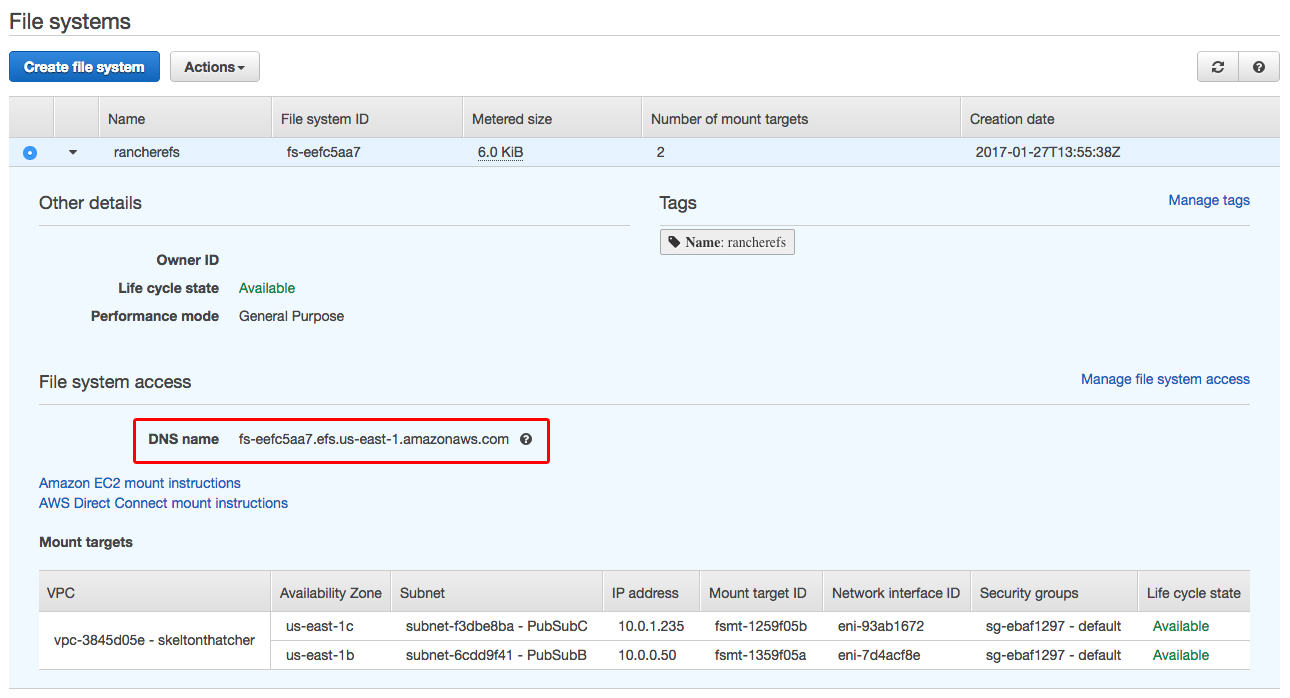

With the EFS volume created, make a note of the DNS name (Fig:6). Previously, EFS mount points were zone specific. The DNS name is a recent addition and now provides a single entry point for mounting multi-AZ file systems.

*NOTE* – In order to resolve the EFS DNS name all connecting resources must be using AWS DNS. This is default for AWS resources within a VPC.

Fig:6
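The EFS DNS name follows the pattern `file-system-id.efs.aws-region.amazonaws.com`. The sketch below builds that name and checks whether the current resolver can look it up, which is a simple way to confirm a host is using AWS DNS. The file system ID and region used in the comment are hypothetical placeholders, and both function names are ours.

```python
import socket


def efs_dns_name(file_system_id: str, region: str) -> str:
    """Build the EFS DNS name for a file system ID and AWS region."""
    return f"{file_system_id}.efs.{region}.amazonaws.com"


def resolves(hostname: str) -> bool:
    """Return True if the local resolver can look up the hostname."""
    try:
        socket.getaddrinfo(hostname, 2049)
        return True
    except socket.gaierror:
        return False


# Example with a hypothetical file system ID:
# name = efs_dns_name("fs-12345678", "eu-west-1")
# resolves(name) is expected to be True only from inside the VPC using AWS DNS.
```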

Install Rancher-NFS

*IMPORTANT* – Our extensive testing proved Ubuntu Linux to be the best option for hosts to support Rancher-NFS. Amazon Linux is NOT supported.

The Rancher-NFS service item can be found within the storage category of the Rancher catalog (Fig:7).

*NOTE* – Although connecting to AWS EFS, we are using Rancher-NFS, NOT Rancher-EFS. Rancher-NFS facilitates a single entry point for our multi-AZ file system, i.e. the EFS DNS Name. The EBS plugin creates non-shared zone specific EBS volumes attached to zone specific hosts.

Fig:7

Install the Rancher-NFS stack using the EFS DNS name as the IP or hostname of the NFS server (Fig:8). We specify the root of the volume ( / ) as the mount point, leave the mount options empty and keep the NFS version at its default (nfsvers=4). Click Launch to add the stack.

Fig:8
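If the stack fails to start, it can help to try the same NFS mount by hand on a host. The sketch below only assembles the equivalent `mount` command from the settings entered above; it does not run it. The target directory `/mnt/efs` and the hostname in the example are hypothetical, and we assume the host has an NFS client installed (on Ubuntu, the `nfs-common` package) and that the command is run as root.

```python
import shlex


def nfs_mount_command(server: str, export: str = "/",
                      target: str = "/mnt/efs",
                      options: str = "nfsvers=4") -> list[str]:
    """Return the argv for a manual NFS mount matching the stack settings."""
    source = f"{server}:{export}"  # e.g. <EFS DNS name>:/
    return ["mount", "-t", "nfs", "-o", options, source, target]


# Hypothetical EFS DNS name, for illustration only.
cmd = nfs_mount_command("fs-12345678.efs.eu-west-1.amazonaws.com")
print(shlex.join(cmd))
```

Building the command as a list keeps each argument separate, so it can be passed to `subprocess.run` without shell quoting concerns if you decide to execute it.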

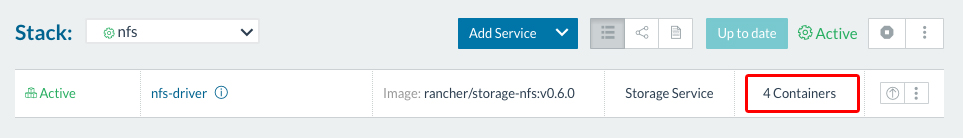

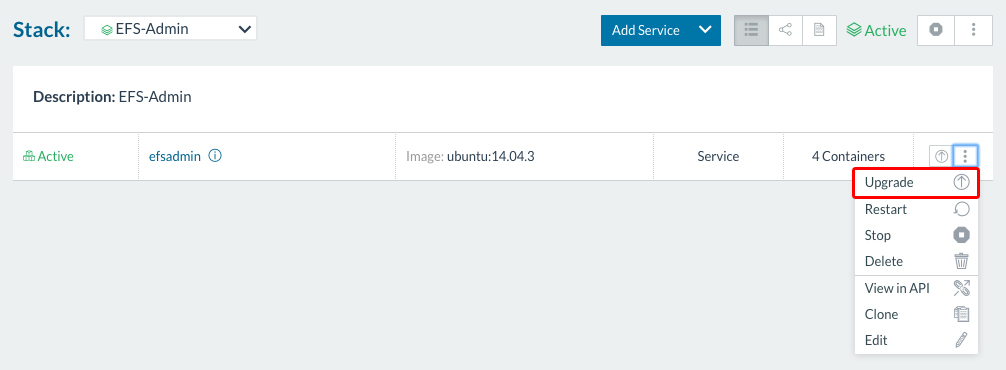

Once the stack is launched, its active state can be viewed, the stack can be upgraded and other services can be added. The stack state example for our Rancher-NFS service (Fig:9) shows x4 containers, as there are x4 hosts running and the stack service is running on each host (Fig:10).

Fig:9

Fig:10

Configure stacks for Rancher-NFS

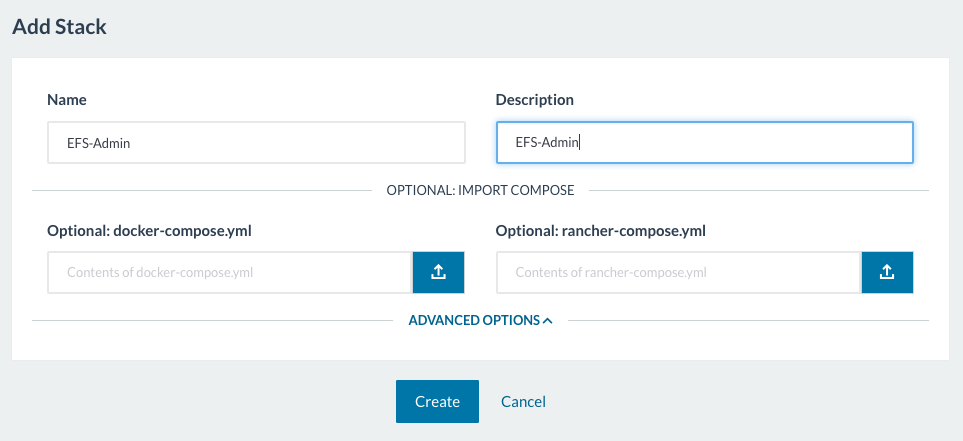

To demonstrate EFS connectivity and HA we can create a simple stack and add an Ubuntu 14.04 service item (Fig:11 & Fig:12).

*NOTE* – To clarify, Ubuntu 14.04 is the container version we are using to create the example stack, whereas we are using Ubuntu 16.04 as the advised OS for the underlying hosts (i.e. the EC2 AMI). We chose Ubuntu 14.04 because that image is populated by default when adding a service. You could use another OS version for the stack.

Fig:11

Fig:12

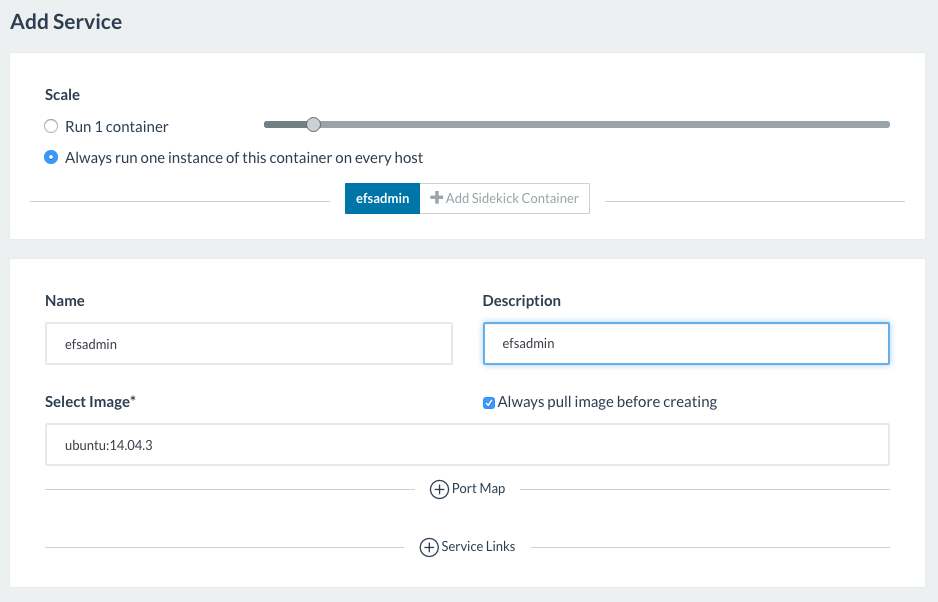

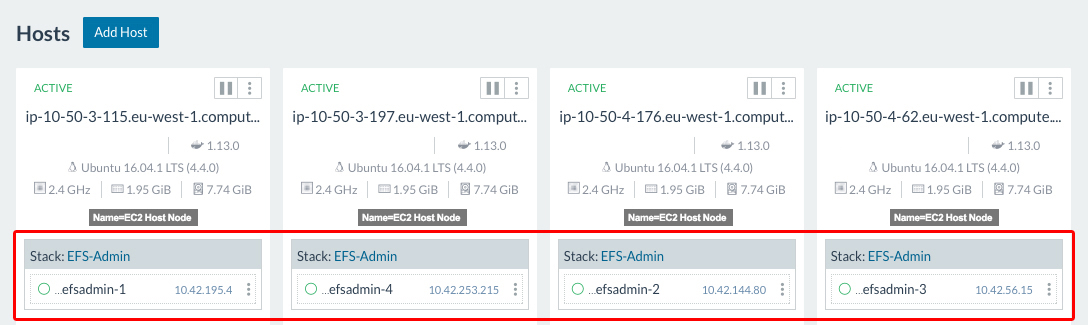

With the stack created we can verify it is running on all our hosts (Fig:13).

Fig:13

Access the upgrade service for the stack you wish to mount to EFS. In our example this is the EFS-Admin stack we created in the previous stage (Fig:14).

Fig:14

Navigate to the volumes tab. Click ‘Add Volume’ and enter a combined mount name and mount location within the container (i.e. awsefs:/tmp). The driver name is rancher-nfs (Fig:15).

Fig:15
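For readers who prefer to keep stack definitions in a file, the same volume settings can be expressed in Compose v2 form. The sketch below builds that structure in Python and prints it as JSON, which YAML parsers also accept. This is an illustration rather than an export from our build: the service name `efs-admin` and the image tag `ubuntu:14.04` are assumptions, and we have not confirmed that the Rancher UI produces an identical file.

```python
import json


def compose_with_nfs_volume(service: str, image: str, volume: str,
                            container_path: str,
                            driver: str = "rancher-nfs") -> dict:
    """Build a Compose v2 structure with one service and one named volume."""
    return {
        "version": "2",
        "services": {
            service: {
                "image": image,
                # Same "name:path" form entered in the volumes tab.
                "volumes": [f"{volume}:{container_path}"],
            }
        },
        # Top-level declaration ties the named volume to the storage driver.
        "volumes": {volume: {"driver": driver}},
    }


compose = compose_with_nfs_volume("efs-admin", "ubuntu:14.04", "awsefs", "/tmp")
print(json.dumps(compose, indent=2))
```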

When the stack shows as upgraded, click Finish Upgrade (Fig:16).

*NOTE* – The ‘Finish Upgrade’ stage applies to any stack service you modify and is required in order for the service to be in a fully active state.

Fig:16

From the Infrastructure > Storage menu the active mounts will now be created and visible under Storage Drivers (Fig:17).

Fig:17

The EFS storage volume is now mounted via NFS to the EFS Admin Service on each of the EC2 Host Nodes (Fig:18)

Fig:18

Testing EFS HA

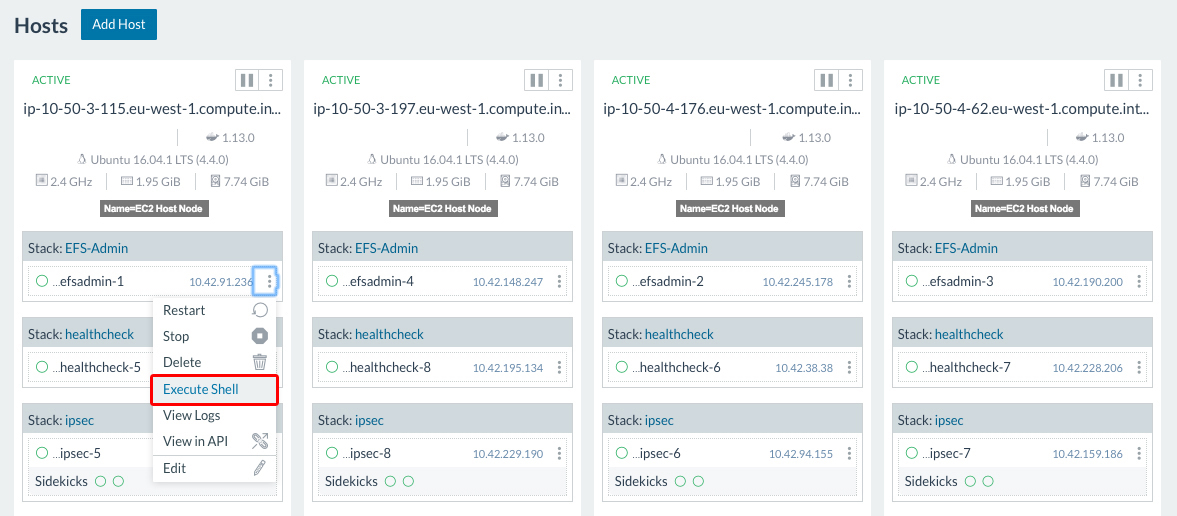

From within the Rancher hosts console, select a host and execute a shell from the stack you have configured to use Rancher-NFS. In our example the stack is EFS-Admin (Fig:19).

Fig:19

Navigate to the mount point within the file system (i.e. /tmp) and create a test file (Fig:20).

Fig:20

Close the shell window. Open a shell on another host, navigate to the same mount point and verify that the file has been created (Fig:21)

Fig:21
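The same check can be scripted if Python is available in the container, which is an assumption on our part as we ran the test by hand from the shell. In this sketch `write_marker` is run in the container on the first host and `read_marker` in the container on the second; the file name `efs-test.txt` is a hypothetical choice.

```python
from pathlib import Path
from typing import Optional


def write_marker(mount_point: str, name: str = "efs-test.txt",
                 text: str = "written from host A\n") -> Path:
    """Create a small test file under the mount point and return its path."""
    path = Path(mount_point) / name
    path.write_text(text)
    return path


def read_marker(mount_point: str, name: str = "efs-test.txt") -> Optional[str]:
    """Return the test file's contents, or None if the file is not visible."""
    path = Path(mount_point) / name
    return path.read_text() if path.exists() else None
```

Calling `write_marker("/tmp")` on one host and `read_marker("/tmp")` on another should return the same text if both containers are backed by the shared EFS volume. A result of `None` on the second host suggests the volume is not shared.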

If you can see the new file, then you have successfully configured your AWS EFS mount via the Rancher-NFS service. Well done..!

Should you require advice or assistance with any aspect of this post, or if you would like to discuss Rancher, AWS or other areas of DevOps as detailed elsewhere on the website, we would be more than happy to hear from you. Please get in touch either via the comments section on this post or via the contact page.